The Moral Labyrinth of AI: Ethical Dilemmas in Autonomous Systems

Does the car save its passengers or pedestrians? And who is to blame?

Artificial intelligence, once considered science fiction, is now part of our daily life. Autonomous driving, healthcare diagnostics, advisory services, and investment decisions are only a few of the sectors and activities in which A.I. makes both simple and complex decisions. Its ability to make choices independently raises important ethical questions about how we interact with it. How can we hold A.I. accountable for the decisions it makes? And what might the butterfly effects of unregulated A.I. systems be?

An astronaut in space. Mike Winkelmann. Behance/Wikimedia.

In traditional decision-making, identifiable human beings can be held accountable for their choices. A.I., however, introduces novel challenges to assessing responsibility, both legally and morally. As algorithms become more complex and their decisions more opaque, determining who is ultimately accountable for an A.I.'s decisions becomes a formidable task. When an autonomous vehicle makes a life-or-death decision, who is responsible? The programmer? The manufacturer? The A.I. itself? This accountability vacuum underscores the need for frameworks that ensure transparency and clear lines of responsibility.

Science fiction author Isaac Asimov's Three Laws of Robotics, which articulate the fundamental principles that should govern human-robot interactions, can serve as a beacon of ethical guidance for interacting with intelligent machines. The First Law states that a robot may not harm a human being or, through inaction, allow a human being to come to harm. The Second Law requires a robot to obey human commands, except where those commands would conflict with the First Law. Lastly, the Third Law requires a robot to protect its own existence, as long as doing so does not conflict with the First or Second Law.

A.I. systems are not robots, but in essence these laws offer important lessons for human-A.I. interaction. The First Law emphasizes human safety and well-being; we, too, must prioritize human welfare in A.I. decision-making. The Second Law underscores human control over robots, just as we must guarantee human oversight and control over A.I.-driven decisions. The Third Law places self-preservation below the first two laws; in the same way, our A.I. models should remain bound by ethical guidelines even as they improve themselves.

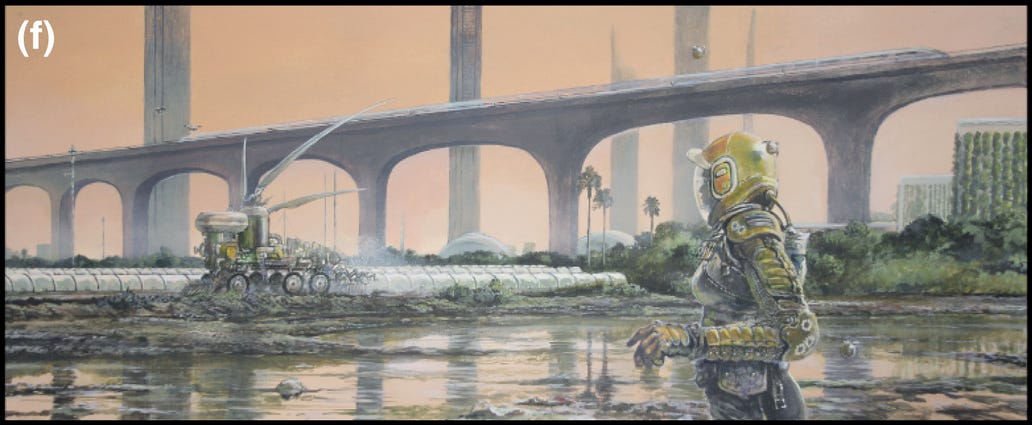

Outdoor agriculture in India in 2500. James McKay, 2021, Wiley Online Library/Wikimedia.

As we integrate A.I. into our lives, we confront another critical issue: how to preserve individual privacy amid the proliferation of A.I. A.I. systems are interconnected, and they rely on vast amounts of data to learn, make predictions, and optimize processes. Keeping this data private and securing access to sensitive information are foremost concerns.

A.I. systems thrive on collecting, storing, and analyzing data, but this reliance inevitably exposes individuals to privacy breaches, including unauthorized surveillance, data leaks, and identity theft. Tackling this privacy puzzle is difficult: on the one hand, we want data to remain private and secure; on the other, innovation requires allowing new technologies to process the data available. Healthcare systems illustrate this dilemma between privacy and transparency particularly well: they aim to fully protect patients' medical records while also allowing the data sharing that may improve patient care. The right balance between privacy and transparency has yet to be found.

A.I. decisions can lead to unpredictable consequences. The algorithms that govern self-driving cars, for example, must grapple with complex, ethically charged dilemmas. Consider this scenario: if a self-driving car must choose between protecting its occupants and protecting pedestrians, whose lives should take priority? The decision itself may be reducible to a few lines of code, yet it has far-reaching ramifications: it can shape public perception of A.I., the way safety regulations are set, and social norms and values, and it can challenge the existing legal system. An A.I. tasked with solving complex ethical questions, such as how to allocate limited medical resources during a crisis, requires an ethical framework that can help it navigate scenarios we cannot yet imagine.

An alien invader watches unaware drunks, The War of the Worlds, H. G. Wells, illustration by Henrique Alvim Corrêa, 1897. The British Library/MonsterBrains.

Because this domino effect is unpredictable, A.I. decision-making calls for a more holistic approach, one that depends on the cooperation of developers, ethicists, policymakers, and the public. Traditional linear thinking may be insufficient when faced with the complexities of A.I.

Integrating ethics into A.I. is becoming an increasingly pressing challenge. Solving it requires a comprehensive approach that enables societies to collaborate in finding the best solutions to existing and future problems. Failing to address transparency in A.I. decision-making processes will undermine public trust and accountability. Developing explainable A.I. models that reveal how their algorithms reach decisions can help bridge the gap between A.I.'s complexity and human comprehension.

We must also establish ethical guidelines and regulations that provide a framework for A.I. development grounded in principles such as fairness, privacy, and human rights. A.I. should reflect the principles each society values, and ensuring this requires interdisciplinary collaboration among technologists, ethicists, policymakers, and the broader public. We can't ignore the ethical considerations and challenges surrounding A.I. As A.I. systems gain autonomy and influence, our responses to these ethical dilemmas will determine how strongly this technology affects our lives. As we navigate this labyrinth, we must maintain a multidisciplinary approach that addresses privacy concerns, fosters transparency, and establishes ethical guardrails. By applying the principles of Asimov's Laws to A.I., we can help ensure that it always remains under human control and helps, rather than harms, our lives.